Automating infrastructure effectively is no longer a luxury but a staple of enterprise transformation. I recently wrote a blog post about using Terraform and Packer together, and wanted to take the idea further by breaking down Terraform Modules and getting connected with Terraform Cloud. I also recently put together a simple module that builds base infrastructure in AWS for testing Alkira Network Cloud. Let’s dive in!

What Is A Terraform Module?

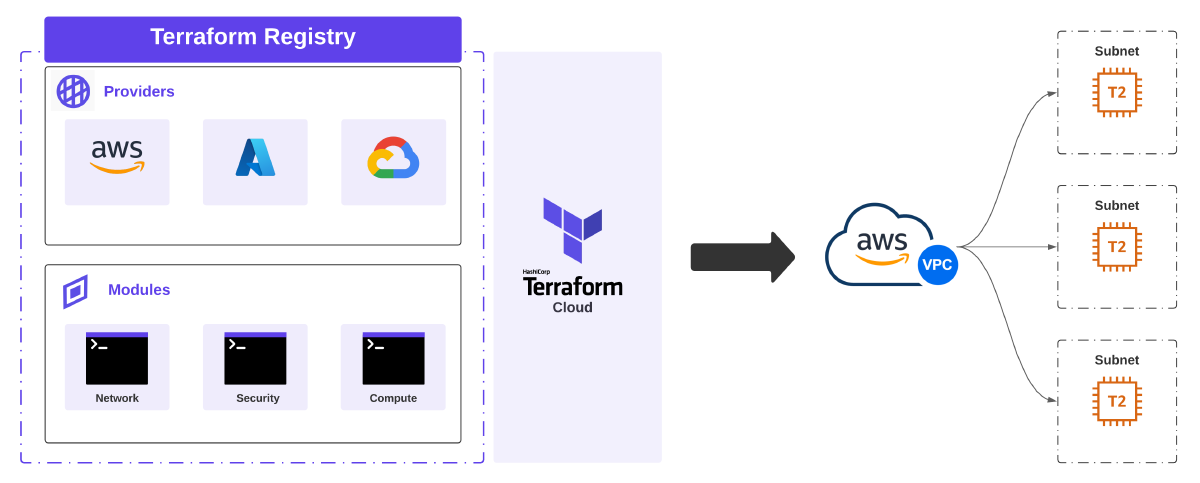

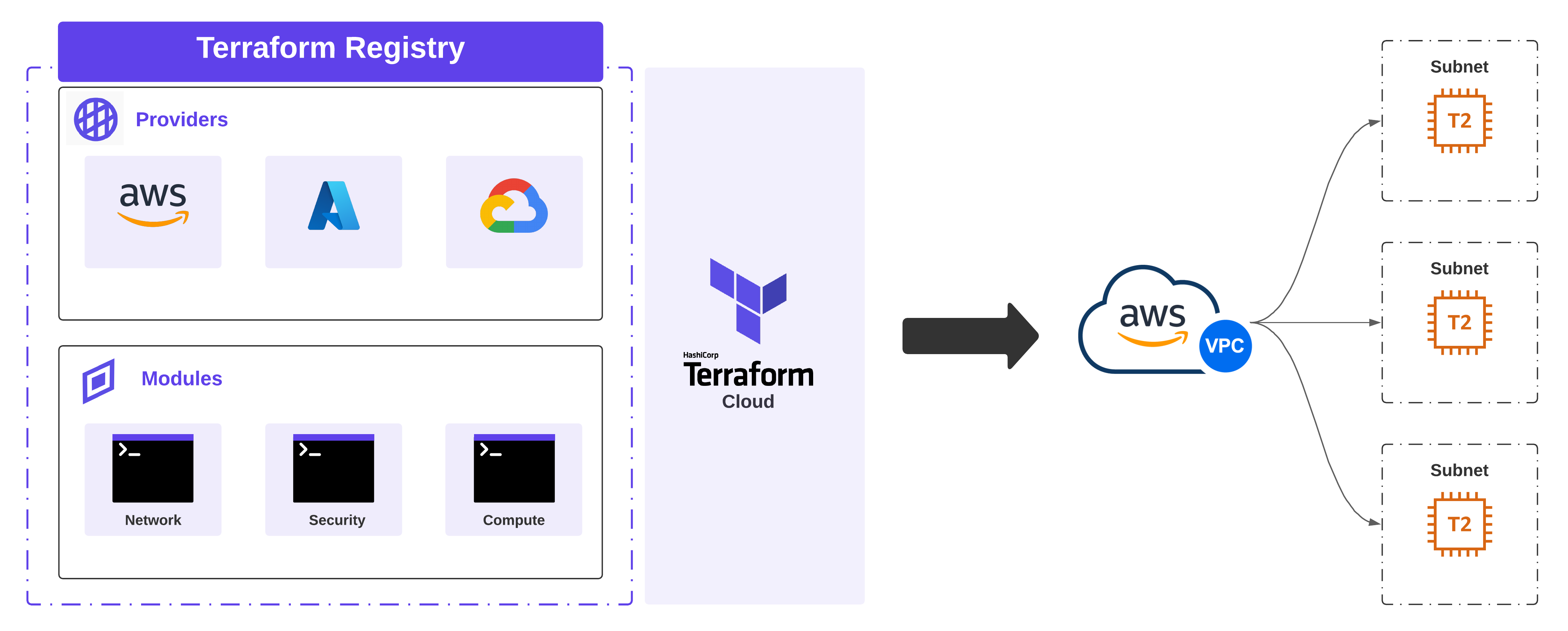

If the goal is to build repeatable blocks of infrastructure that provision consistently, getting acquainted with Terraform Modules can help you get there. Deploying cloud infrastructure means deploying resources that depend on each other, are generally deployed together, and share the same lifecycle. This is what Terraform modules do - they package and manage common resources together, extending reuse and environmental consistency.

Modules are managed in a version control system like GitHub and published to the Terraform Registry. Terraform Enterprise and Terraform Cloud each include a private registry, making them an ideal vehicle for building, sharing, and managing an organization’s internal modules. Once a module is published, it can be combined with other modules to build purpose-built workspaces. For this example, I’m going to use the public registry.
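To make this concrete, here is a minimal sketch of consuming a published module from a registry. The registry address `example-org/base-infra/aws`, the `vpc_cidr` input, and the `vpc_id` output are hypothetical placeholders, not the module described in this post.

```hcl
# Registry addresses take the form <NAMESPACE>/<NAME>/<PROVIDER>.
# This address is a hypothetical placeholder.
module "base_infra" {
  source  = "example-org/base-infra/aws"
  version = "~> 1.0" # accept any 1.x release, but not 2.0

  # Input names are illustrative; a real module defines its own inputs.
  vpc_cidr = "10.10.0.0/16"
}

# A module's outputs can be passed to other resources or modules.
# "vpc_id" is assumed to be an output the module exposes.
output "vpc_id" {
  value = module.base_infra.vpc_id
}
```

Pinning `version` keeps a workspace from silently picking up a new major release of the module.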

Creating A Custom Module

For my testing, I needed the flexibility to create a new AWS VPC in one or more regions, provide a dynamic list of subnets to be provisioned, and also create a lightweight EC2 instance per subnet. However, setting these environments up by hand takes time, and tearing down all the infrastructure manually takes even more time. Plus, I want to use this configuration in tandem with similar scenarios in other cloud providers while testing and demonstrating Alkira.
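One way to express those requirements is a `for_each` over a map of subnets, with one instance created per subnet. This is a sketch under assumptions rather than the module's actual code: the variable names, tags, instance type, and AMI name filter are all illustrative.

```hcl
# Hypothetical inputs; the real module may name and shape these differently.
variable "vpc_cidr" {
  type        = string
  description = "CIDR block for the VPC"
}

variable "subnets" {
  description = "Subnets to create, keyed by name"
  type = map(object({
    cidr = string
    az   = string
  }))
}

# Look up a recent Amazon Linux 2023 image in the current region.
# The name pattern is an assumption about how those AMIs are named.
data "aws_ami" "amazon_linux" {
  most_recent = true
  owners      = ["amazon"]

  filter {
    name   = "name"
    values = ["al2023-ami-2023.*-x86_64"]
  }
}

resource "aws_vpc" "this" {
  cidr_block = var.vpc_cidr
  tags       = { Name = "alkira-test" }
}

# One subnet per entry in var.subnets.
resource "aws_subnet" "this" {
  for_each = var.subnets

  vpc_id            = aws_vpc.this.id
  cidr_block        = each.value.cidr
  availability_zone = each.value.az
  tags              = { Name = each.key }
}

# One lightweight instance per subnet, keyed the same way as the subnets.
resource "aws_instance" "this" {
  for_each = aws_subnet.this

  ami           = data.aws_ami.amazon_linux.id
  instance_type = "t3.micro"
  subnet_id     = each.value.id
  tags          = { Name = "${each.key}-test" }
}
```

Because the instances iterate over the subnet resources, adding or removing an entry in `subnets` adds or removes the matching subnet and instance together.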

Version Control

I created a repository on GitHub to hold my work. Module components include:

- main.tf - Primary logic which describes the infrastructure I want to build

- variables.tf - Required input variables which must be set in the module block

- outputs.tf - Values that are exposed for other Terraform configurations to use; these act like return values in programming languages

- versions.tf - Acceptable versions of Terraform and the provider that work with my custom module
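As a rough illustration of the smaller files in that layout, a `versions.tf` and an `outputs.tf` might look like the following. The version constraints and the output name are assumptions, and the single `aws_vpc` resource is only a stand-in for whatever `main.tf` defines.

```hcl
# versions.tf: constraints on Terraform and the AWS provider.
# The specific version numbers are illustrative.
terraform {
  required_version = ">= 1.0"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = ">= 4.0"
    }
  }
}

# main.tf: stand-in resource so the output below has something to reference.
resource "aws_vpc" "this" {
  cidr_block = "10.10.0.0/16"
}

# outputs.tf: values the caller can read as module.<name>.vpc_id.
output "vpc_id" {
  description = "ID of the VPC created by the module"
  value       = aws_vpc.this.id
}
```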

Creating A Release

Both the public and private Terraform registries expect release tags that identify module versions. Tag names must follow semantic versioning, such as v1.0.0, where the leading v is optional.

Publishing A Module

Publishing the new module couldn’t be easier. During setup, the registry asks you to connect your GitHub account. If you are publishing to the public registry, it only needs access to your public repositories.

Terraform Cloud

Terraform Cloud makes provisioning easy. I set up a separate repository to test out the new module. To run the new module, we need two files in that repository.
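As a rough sketch, the two files could be a `variables.tf` that declares the inputs and a `main.tf` that configures the provider and calls the module. The file names, the module address, and every variable name below are assumptions for illustration only.

```hcl
# variables.tf (assumed file name): inputs supplied by the workspace.
variable "aws_region" {
  type    = string
  default = "us-east-2"
}

variable "vpc_cidr" {
  type = string
}

variable "subnets" {
  type = map(object({
    cidr = string
    az   = string
  }))
}

# main.tf (assumed file name): provider setup and the module call.
provider "aws" {
  region = var.aws_region
}

module "base_infra" {
  source  = "example-org/base-infra/aws" # hypothetical registry address
  version = "1.0.0"                      # matches a release tag of the module

  # Hypothetical inputs, passed straight through from the workspace.
  vpc_cidr = var.vpc_cidr
  subnets  = var.subnets
}
```

Covering a second region would typically mean a second `provider "aws"` block with an `alias` and a second module block that selects it through the `providers` argument.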

Create A Workspace

Before applying any infrastructure, we must create a new Workspace in Terraform Cloud.
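A workspace can be created in the Terraform Cloud UI and connected to the repository. For CLI-driven runs, the configuration can also point at a workspace with a `cloud` block (available in Terraform 1.1 and later). The organization and workspace names here are hypothetical.

```hcl
terraform {
  cloud {
    organization = "example-org" # hypothetical organization name

    workspaces {
      name = "alkira-aws-base" # hypothetical workspace name
    }
  }
}
```

With a VCS-driven workspace this block is not required, since Terraform Cloud already knows which workspace the repository belongs to.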

Apply Infrastructure

After creating the Workspace and populating the appropriate variables, we can provision our desired infrastructure.

Destroy Infrastructure

Running cloud infrastructure that isn’t being used is a great way to rack up unwanted costs. So when testing is completed, let’s destroy our infrastructure.

Conclusion

Automating the delivery of complete environments, deployed intact, opens up a whole new world of possibilities. Terraform Modules simplify the building blocks of immutable infrastructure, and Terraform Cloud enhances the ability to deliver and iterate. Stay tuned for new content that showcases the power of Terraform driving Alkira Network Cloud.