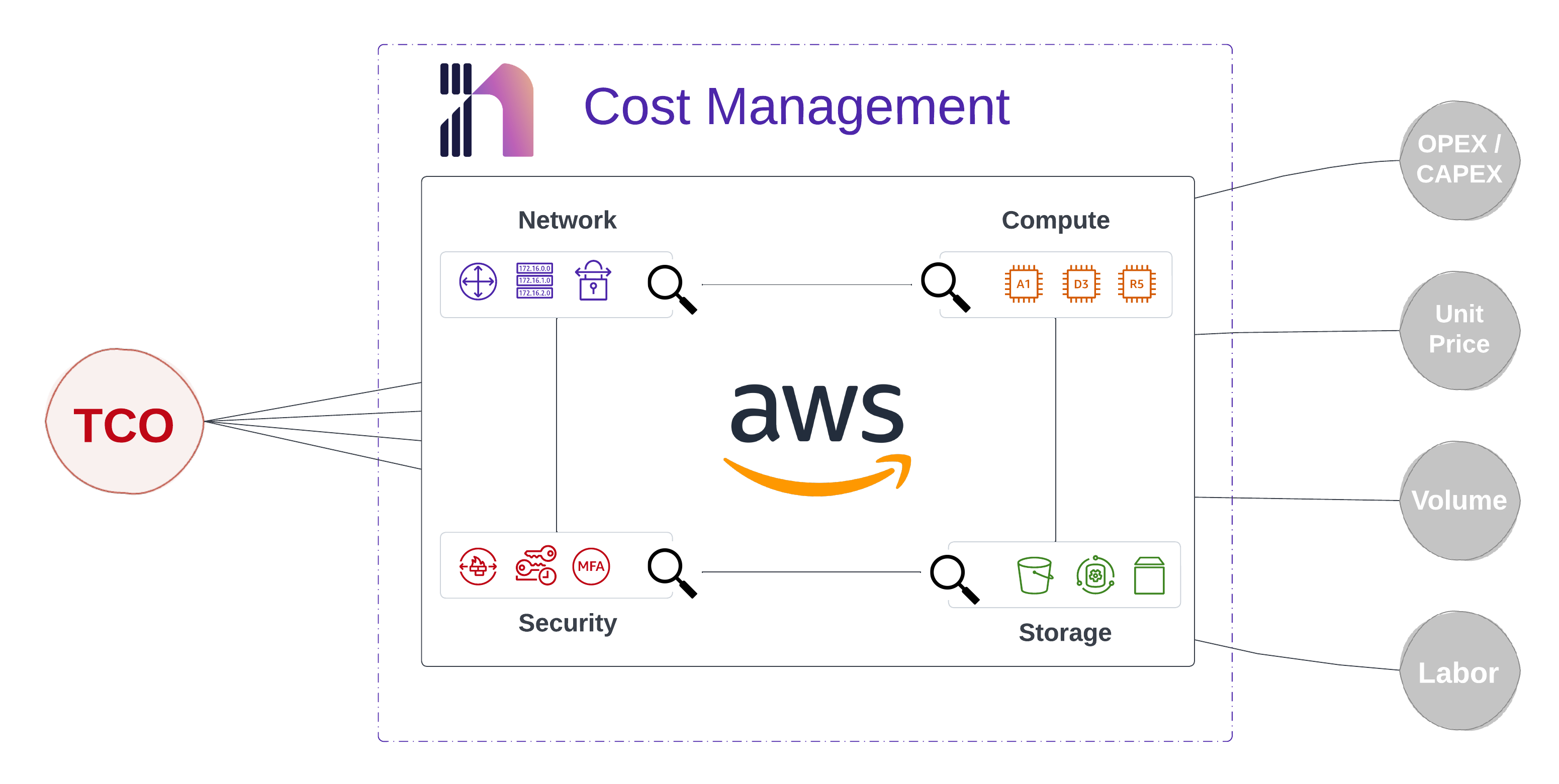

Blowing out cloud spend is an easy thing to do. A McKinsey report notes that 80% of enterprises consider managing cloud spend a challenge. I recently presented at the Cloud Security Alliance in Kansas City and had the opportunity to network with some tremendous DevOps and security professionals. One excellent side conversation turned into a deep discussion about better ways to understand cost implications in the era of infrastructure as code. Shouldn’t cost be someone else’s problem?

Cost is a Shared Responsibility

As organizations continue shifting workloads to the cloud, cloud costs increasingly affect the bottom line. Responsibility for cost management now extends beyond the CIO and accounting, straight down to individual engineers. If this sounds scary, fear not: it is an incredible opportunity in the making.

Where do Engineers Work?

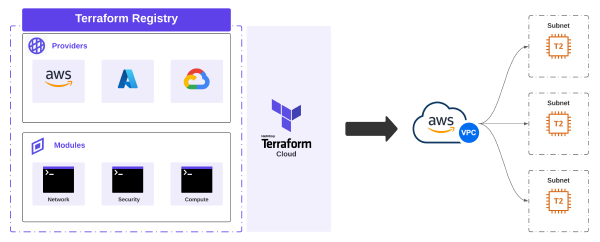

Engineers do not work in spreadsheets, nor do they work in accounting software. Most software engineers spend their day in version control, and the centralized teams that manage cloud infrastructure more broadly live in this world as well. Version control platforms often enforce an approval process before a pull request is merged and infrastructure is provisioned. What if you could see the cost impact right there, where you, the engineer, already live? That is what Infracost does: it estimates the cost of Terraform changes and shows it alongside the pull request.

Prerequisites

First, follow the installation instructions in the Infracost documentation to download and authenticate Infracost. This includes creating an organization in the Infracost platform, which is where you fetch the API key. Next, we need a quick way to spin up some small AWS instances and then dial them up to more expensive options.

AWS Configuration

I’ll be using Terraform to build the AWS infrastructure, keeping the dynamic portions of the configuration (here, the instance type and count) in a separate locals block so we can easily adjust them for testing.
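
A minimal sketch of such a configuration, assuming the AWS provider, the us-east-1 region, an Amazon Linux 2 AMI lookup, and locals named instance_type and instance_count. These names and values are illustrative choices, not necessarily the exact file behind the numbers in this post. The locals start at a single t2.micro.

```hcl
# Minimal sketch: EC2 instances whose size and count come from locals.
# Region, AMI filter, and resource/local names are illustrative assumptions.
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
  }
}

locals {
  # Dynamic values kept in one place so they are easy to adjust for testing
  region         = "us-east-1"
  instance_type  = "t2.micro"
  instance_count = 1
}

provider "aws" {
  region = local.region
}

# Look up the most recent Amazon Linux 2 AMI published by Amazon
data "aws_ami" "amazon_linux_2" {
  most_recent = true
  owners      = ["amazon"]

  filter {
    name   = "name"
    values = ["amzn2-ami-hvm-*-x86_64-gp2"]
  }
}

# One instance per count, all using the type chosen in the locals block
resource "aws_instance" "test" {
  count         = local.instance_count
  ami           = data.aws_ami.amazon_linux_2.id
  instance_type = local.instance_type

  tags = {
    Name = "infracost-test-${count.index}"
  }
}
```

Because the instance type and count live in the locals block, dialing the instances up later is a two-line change.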


Let’s Test via CLI

First, let’s run terraform plan against the starting criteria: a single t2.micro instance.


Running a Cost Estimate

Now, let’s run a cost estimate with Infracost and provision an instance.

Once this is done, we can check out the results in the Infracost portal.

Running a Cost Diff

Bigger and more expensive is better, right? Let’s update our configuration by switching that t2.micro to an m5.24xlarge and seeing how much it would cost to provision four of them.
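
As a sketch, assuming the configuration exposes the instance type and count as locals named instance_type and instance_count (hypothetical names), the change touches only those two values:

```hcl
locals {
  # Only these two values change; any other locals stay as they were.
  # The local names are illustrative assumptions.
  instance_type  = "m5.24xlarge" # previously "t2.micro"
  instance_count = 4             # previously 1
}
```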


This time, we will tweak the Infracost command to generate a diff.

Once we navigate back to the portal, we can see the cost change. I have gone from a monthly cost of $9.27 up to a panic-inducing $13,449. Maybe I don’t need the m5.24xlarge instances for this testing!
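
For a sense of scale, here is the arithmetic on the two monthly figures shown in the portal:

$$
\Delta = \$13{,}449 - \$9.27 = \$13{,}439.73 \text{ per month}, \qquad \frac{\$13{,}449}{\$9.27} \approx 1{,}451
$$

In other words, the updated configuration would cost roughly 1,451 times as much per month as the original.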

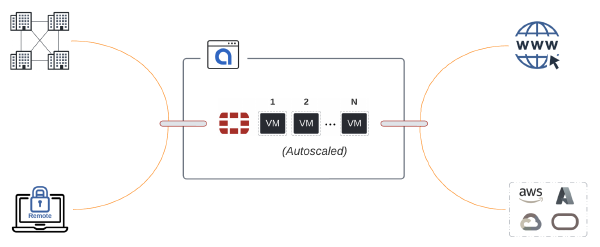

Testing with GitHub Actions

In the spirit of shifting left, let’s try GitHub Actions running against every pull request. A lot of cloud spending happens outside production, so this is an excellent way to control spending right at the source: if something doesn’t get deployed, it can’t cost you anything.

GitHub Actions Workflow

By default, the workflow runs the typical terraform init and terraform plan, saving the plan to plan.tfplan. It then runs terraform show -json plan.tfplan and saves the output to plan.json, which Infracost uses to run its calculations. The resulting estimate is posted to the pull request’s conversation alongside the results of everything else being tested in the pipeline.


Creating a Pull Request

Once any pull request is created, the workflow runs and posts the cost details. At this point, the repository can require multiple approvals before the change is merged; three sets of eyes are better than one when spending so much with the click of a button! The supported CI/CD platforms are listed in the Infracost documentation.

Conclusion

Understanding total cost of ownership (TCO) in the cloud is a deep topic that spans the whole organization. It is easy to keep provisioning EC2 instances when you are disconnected from the cost. Seeing the cost in the pipeline is a fantastic way to practice due diligence on the technical side of that responsibility.

Arming engineers with the right tooling and knowledge will help drive cost-conscious decisions. Small steps like this, combined with a strategic approach to continuous governance, go a long way toward getting cloud spend under control.