The discourse on platform engineering Twitter and Reddit has been pretty consistent for months now: Terraform is dead, HCL is dead, the entire Infrastructure as Code stack is about to get vaporized by autonomous agents that translate intent directly into cloud provider API calls. The 24-month clock is ticking. Pack up your .tfstate and go home. Another DEAD DEAD DEAD narrative emerges.

I’m sympathetic to part of this. The friction of writing HCL by hand, getting the modules right, fighting state lock contention, debugging a failed apply on something at massive scale (at 1AM) - that whole experience has always been somewhere between tedious and miserable. If an agent can just… do it… why shouldn’t it be so?

I think this narrative mistakenly conflates two different things: the authorship of infrastructure and the lifecycle management of it. AI is going to obliterate the first one. I believe it already has. It’s nowhere close to obsoleting the second. And anyone shipping an enterprise cloud estate without lifecycle management is one bad afternoon away from a postmortem they don’t want to write.

This is the case for AI through IaC - not AI instead of it. Common sense needs to make a big resurgence.

This is a hard topic to digest. Even having a productive conversation about it (especially if you build products in the automation space) takes practical, hands-on time building infrastructure at scale with provisioning tooling and patterns. That’s a real shift in mental models if you’ve spent your career in the configuration management world. My take is that the lack of provisioning reps, coupled with heavy muscle memory around mutable automation patterns, is what drives most of this frame of thinking - with a nice injection of AI hype poured into the cocktail.

The Argument Sounds Right (At First)

Let’s steelman the death-of-HCL position because it isn’t crazy. It just isn’t complete.

The intent-to-execution stack we’ve operated in for a decade looks like this: human types HCL → CLI builds a plan → provider plugins translate to API calls → cloud responds → state file updates. Five layers of abstraction between I want a VPC and the VPC exists. Every layer exists for a reason, but every layer is also a tax.

LLMs short-circuit a lot of that. Modern agents can read a cloud provider’s API spec, reason over the schema, and emit valid calls without any DSL in the middle. The agent is the new compiler. Natural language is the new source code. Cloud APIs are the new runtime. Why pay the HCL tax if the agent doesn’t need the syntactic sugar?

There’s even a token-economics argument here that’s harder to dismiss than I’d like. Most production HCL lives in private repos that LLMs have never seen, and AI Terraform synthesis at production-grade complexity is meaningfully worse than what we see for general-purpose code. Meanwhile, agent-native context formats like AGENTS.md and MCP servers (which can pull live context from environments, Confluence, SharePoint, and just about anything else with an API) are starting to show real runtime gains just by shortening the distance between an agent and the truth. If the primary author of infrastructure is increasingly an agent, optimizing the format for humans is starting to feel a little nostalgic.

What Dies When the State Dies

Then I think about the state file.

The state file is the part of the IaC stack that doesn’t get celebrated (always the bridesmaid, never the bride), but it does do a ton of the heavy lifting. It’s the mapping from what you said you wanted to what’s actually running in the cloud. Without it, an agent calling CreateSubnet has the memory of a goldfish.

This matters because cloud APIs aren’t idempotent in the way IaC is. And the agent’s own context isn’t durable either - it fills up, gets cleared, gets compacted, and the agent quietly forgets it already did half the work an hour ago. Declarative tools achieve idempotency by maintaining a record of what they’ve already created and only applying the delta. Raw API calls don’t work that way. Many create calls will happily create a new resource every time you call them unless the caller passes an idempotency token, and a forgetful agent has nothing to base that token on. Replay the same provisioning run three times and you’ve got three NAT gateways billed against you and three sets of route table entries that don’t agree with each other.
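To make the difference concrete, here is a minimal sketch of a non-idempotent create call next to a state-tracked apply. `FakeCloud`, `create_nat_gateway`, and `apply` are hypothetical names invented for illustration. This is not a real SDK and not how Terraform is implemented internally, just the smallest version of "keep a record, apply only the delta."

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class FakeCloud:
    """Hypothetical in-memory stand-in for a provider API (not a real SDK)."""
    gateways: dict = field(default_factory=dict)
    _ids: count = field(default_factory=count, repr=False)

    def create_nat_gateway(self, subnet: str) -> str:
        # Non-idempotent: every call mints a brand new resource.
        gateway_id = f"nat-{next(self._ids)}"
        self.gateways[gateway_id] = subnet
        return gateway_id


def apply(desired: dict, state: dict, cloud: FakeCloud) -> list:
    """Create only what the state does not already record.

    `desired` maps logical names to subnets; `state` maps logical names
    to the real IDs created on earlier runs. Returns the names created now.
    """
    created = []
    for name, subnet in desired.items():
        if name in state:
            continue  # already created on a previous run, nothing to do
        state[name] = cloud.create_nat_gateway(subnet)
        created.append(name)
    return created


# An agent with no memory replays the same raw call three times.
raw_cloud = FakeCloud()
for _ in range(3):
    raw_cloud.create_nat_gateway("subnet-a")

# The same intent, applied three times against a persistent state record.
tracked_cloud = FakeCloud()
state = {}
desired = {"egress": "subnet-a"}
runs = [apply(desired, state, tracked_cloud) for _ in range(3)]

print(len(raw_cloud.gateways), "gateways from raw calls")
print(len(tracked_cloud.gateways), "gateway from state-tracked applies")
```

The only thing separating the two outcomes is the `state` dictionary surviving between runs, which is exactly what an agent's context window does not guarantee.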

Strip away the state file and the agent has two options. Neither one is great:

- Continuous Discovery: Read the entire cloud environment before every action to figure out what already exists. This is computationally expensive, hits API rate limits at real scale, and is wide open to race conditions when multiple agents operate in parallel.

- External State: Maintain its own database of resource mappings. Which is reinventing the state file in a proprietary format, except now nobody has tooling for it and your agent is the only thing that can read it.

This is the part of the API-only pitch that always quietly disappears. It’s not that state is bad. It’s that state is load-bearing, and the loud minority arguing for its death tend to skip past the part where they explain what replaces it.

The Real Direction: State Becomes a Graph

The real answer is that the state file doesn’t go away. It grows up (or glows up? I need to be more trendy with my writing).

What’s emerging is a shift from static .tfstate JSON to real-time relational graphs of the live environment. HashiCorp’s Project Infragraph is one of the more visible bets in this direction, with System Initiative and a handful of newer entrants pushing further into “graph of graphs” territory - because once you’re operating at scale, inter-graph dependencies get genuinely thorny and that’s exactly the kind of context an agent needs to write reliable changes. The pitch is straightforward: instead of a flat file you read after a successful apply, you maintain a continuously synced graph that connects resources, applications, and ownership metadata. Agents query the graph to reason about what’s actually out there before they act on it.

| Feature | State File | Real-Time Infrastructure Graph |
|---|---|---|
| Data structure | Static JSON | Dynamic relational graph |
| Update mechanism | Post-execution write | Continuous sync / discovery |
| Primary consumer | CLI tool (Terraform) | AI reasoning engines |
| Granularity | Managed resources only | Entire environment (managed + unmanaged) |
| Dependency mapping | Directed acyclic graph | N-dimensional relational links |

The graph is what makes Day 2 operations possible for an agent. A DAG tells you the subnet depends on the VPC. A graph tells you the subnet depends on the VPC, the workload running in it, the team that owns the workload, the on-call engineer, the cost center, and the compliance tags applied to the data flowing through it. This has great potential to be the context an autonomous agent (or a human, frankly) actually needs to make a non-stupid decision at 2AM.
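As a rough illustration of that difference, the sketch below stores typed edges and compares a dependency-only lookup with a full context query. The `InfraGraph` class, the relation names, and every resource and team name are made up for the example. None of it reflects the data model of any specific product mentioned here.

```python
from collections import defaultdict


class InfraGraph:
    """Toy relational graph: nodes linked by named relations (hypothetical model)."""

    def __init__(self):
        self._edges = defaultdict(list)  # node -> [(relation, target), ...]

    def link(self, source: str, relation: str, target: str) -> None:
        self._edges[source].append((relation, target))

    def depends_on(self, node: str) -> list:
        """What a plain dependency DAG would answer for this node."""
        return [t for r, t in self._edges[node] if r == "depends_on"]

    def context(self, node: str) -> dict:
        """Everything reachable from the node, grouped by relation type."""
        found = defaultdict(set)
        seen = {node}
        stack = [node]
        while stack:
            current = stack.pop()
            for relation, target in self._edges[current]:
                found[relation].add(target)
                if target not in seen:
                    seen.add(target)
                    stack.append(target)
        return {relation: sorted(targets) for relation, targets in found.items()}


graph = InfraGraph()
graph.link("subnet-a", "depends_on", "vpc-main")
graph.link("subnet-a", "hosts", "checkout-api")
graph.link("checkout-api", "owned_by", "payments-team")
graph.link("checkout-api", "billed_to", "cc-1234")
graph.link("checkout-api", "handles", "pci-data")
graph.link("payments-team", "on_call", "alice")

dag_answer = graph.depends_on("subnet-a")
graph_answer = graph.context("subnet-a")

print("DAG view:  ", dag_answer)
print("Graph view:", graph_answer)
```

The DAG view stops at the VPC. The graph view surfaces the workload, owner, on-call engineer, cost center, and data classification in a single query, which is the context an agent would want before touching the subnet.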

A few startups are leaning further and operating without persistent state at all - treating live cloud APIs as the only source of truth and doing in-memory delta calculation on every action. It’s a clean architectural idea. At scale you immediately run into the same problem as continuous discovery: querying thousands of resources across multiple regions takes seconds to minutes, not milliseconds. “Real-time” starts to mean “eventually pretty fast.”

“Stateless” is doing a lot of work in some of these pitches. What’s actually being proposed is externalized state - the cloud provider’s database becomes your state store. That works fine until you need to know something the cloud provider doesn’t track, which in my experience is roughly the entire reason teams adopt IaC in the first place.
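One thing the provider doesn’t track is which resources are yours to manage. The sketch below is a hypothetical in-memory delta calculation. `plan_delta`, the resource names, and the shape of the dictionaries are assumptions for illustration, not any vendor’s algorithm. It shows what happens to the delete set with and without a record of ownership.

```python
def plan_delta(desired: dict, discovered: dict, managed=None) -> dict:
    """Compare desired config against what discovery found in the live account.

    `managed` is the set of names this tool knows it created. With no such
    record (managed=None), every discovered resource is a deletion candidate.
    """
    if managed is None:
        deletable = set(discovered)
    else:
        deletable = set(discovered) & set(managed)
    shared = set(desired) & set(discovered)
    return {
        "create": sorted(set(desired) - set(discovered)),
        "update": sorted(name for name in shared if desired[name] != discovered[name]),
        "delete": sorted(deletable - set(desired)),
    }


# What a listing call returns: only facts the provider itself stores.
live = {
    "app-subnet": {"cidr": "10.0.1.0/24"},
    "legacy-subnet": {"cidr": "10.0.9.0/24"},  # hand-built by another team
}
wanted = {
    "app-subnet": {"cidr": "10.0.1.0/24"},
    "cache-subnet": {"cidr": "10.0.2.0/24"},
}

stateless_plan = plan_delta(wanted, live)
stateful_plan = plan_delta(wanted, live, managed={"app-subnet"})

print("stateless:", stateless_plan)
print("stateful: ", stateful_plan)
```

With no ownership record, the other team’s subnet looks like drift to be cleaned up. Real tools would lean on tags or naming conventions to narrow that down, which is just state stored somewhere else.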

MCP Reinforces IaC

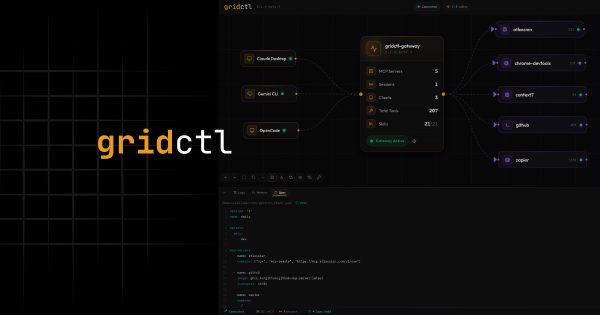

The other piece of the death-of-IaC argument is the Model Context Protocol - and this one’s interesting because I’m a believer in MCP. I’ve written about it a ton. I even built Gridctl - an OSS local dev tool for rapid prototyping. The protocol is genuinely the right answer to the N×M integration problem of connecting agents to tools.

The thing nobody seems to want to engage with is that the most useful MCP servers in the infrastructure space aren’t the ones that bypass IaC. They’re the ones that make IaC better.

Take the Terraform MCP server as the cleanest example. It hands an agent direct access to official provider documentation, valid resource schemas, and policy requirements - everything needed to generate Terraform that actually works on the first try. The state file, plan/apply cycle, and provider system stay exactly where they are. What changes is who’s writing the code: an agent with access to the same primary sources a senior engineer would consult, instead of a human banging out HCL by hand.

In this model, IaC is no longer the user interface. It’s the assembly language. The agent compiles natural language down to HCL the same way a C compiler compiles down to x86. The HCL is still there, still committed, still reviewable, still auditable - it just isn’t authored by a human anymore.

That’s not the death of Terraform. That’s Terraform being given the opportunity to get out of the way.

The Trust Boundary Is Why HCL Survives

Here’s the part the death-of-IaC crowd consistently underweights: the technical capability of an agent to call an API directly does not equate to the organizational permission to do so. This is a hard one to appreciate if you haven’t worked in a large enterprise or a highly regulated market like healthcare.

Production infrastructure changes are not git commits. They’re not reversible by a revert. Deleting a database doesn’t roll back. Misconfiguring a security group can leak customer data in seconds. The reason every serious organization runs IaC through GitOps isn’t because HCL is a beautiful syntax - it’s because the workflow gives you a trust boundary. The PR is the boundary. The plan is the boundary. The approval is the boundary.

Strip that out and the governance story collapses in three VERY predictable ways:

- Auditability disappears into CloudTrail. A raw API call becomes one log entry among millions. An HCL change in Git is a permanent, searchable, attributable record of who wanted what, when, and why. I’d rather grep a repo than try to reconstruct intent from telemetry.

- Pre-apply validation gets harder. Tools like OPA and Conftest work because there’s a static artifact to validate before anything touches the cloud. Move policy enforcement to runtime API interception and you’ve made the problem an order of magnitude more complex.

- Blast radius control evaporates. HCL modules let you codify golden paths and restrict the option space available to whoever’s invoking them. Hand an agent direct API access and you’ve handed it the entire IAM-permitted surface of your cloud account - which is approximately everything.
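To show why a static artifact makes pre-apply validation tractable, here is a small Python stand-in for the kind of check OPA or Conftest would express in Rego. The field names (`resource_changes`, `address`, `type`, `change.actions`, `change.after`) follow Terraform’s JSON plan representation as produced by `terraform show -json`. The two rules, the `PROTECTED_TYPES` set, and the sample plan are hypothetical.

```python
# Hypothetical policy: resource types that must never be deleted by a plan.
PROTECTED_TYPES = {"aws_db_instance"}


def violations(plan: dict) -> list:
    """Return human-readable policy violations found in a parsed JSON plan."""
    problems = []
    for rc in plan.get("resource_changes", []):
        actions = rc["change"]["actions"]
        after = rc["change"].get("after") or {}  # "after" is null on deletes

        if "delete" in actions and rc["type"] in PROTECTED_TYPES:
            problems.append(f"{rc['address']}: deleting a protected resource type")

        if rc["type"] == "aws_security_group":
            for rule in after.get("ingress") or []:
                if "0.0.0.0/0" in (rule.get("cidr_blocks") or []):
                    problems.append(f"{rc['address']}: ingress open to 0.0.0.0/0")
    return problems


# Trimmed-down sample; a real plan would come from json.load() on the
# output of `terraform show -json <planfile>`.
sample_plan = {
    "resource_changes": [
        {
            "address": "aws_security_group.web",
            "type": "aws_security_group",
            "change": {
                "actions": ["create"],
                "after": {
                    "ingress": [
                        {"cidr_blocks": ["0.0.0.0/0"], "from_port": 22, "to_port": 22}
                    ]
                },
            },
        },
        {
            "address": "aws_db_instance.orders",
            "type": "aws_db_instance",
            "change": {"actions": ["delete"], "after": None},
        },
        {
            "address": "aws_s3_bucket.logs",
            "type": "aws_s3_bucket",
            "change": {"actions": ["no-op"], "after": {}},
        },
    ]
}

found = violations(sample_plan)
for line in found:
    print("DENY", line)
```

Both problems are caught before a single API call is made. Enforcing the same rules against a stream of direct API calls would mean intercepting and interpreting each request in flight.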

The speed argument cuts both ways. AI moving at machine speed through direct API calls means drift accumulates faster than any human-led governance model can detect. “AgentOps” without guardrails ends up in a place where your cloud changes hundreds of times a day and nobody can reconstruct why - which breaks both the audit story and the cost story simultaneously.

What Actually Happens Over The Next 24 Months

If I had to hypothesize and find a middle ground on where this lands, it’s not a binary. It’s a layered model where the rules get stricter in proportion to the blast radius. As it turns out, we still need to think about architecture and risk tolerance.

| Trend | Mechanism | Practical impact |
|---|---|---|
| State becomes graphs | .tfstate → real-time relational graphs | Agents finally have context for autonomous Day 2 Ops |
| MCP eats provider plugins | Standardized agent-to-infrastructure protocol | Universal tools replace bespoke integrations |
| Stateless prototyping | Discovery-based provisioning for sandboxes | Less CI/CD toil for ephemeral dev environments |
| Automated codification | Agents reverse-engineer cloud state into IaC | HCL becomes the audit trail, not the input |

For sandboxes, prototypes, and short-lived dev environments, agents will absolutely operate through direct APIs. Great science-experiment territory! The blast radius is small, the recovery cost is trivial, and the speed gain is real. We’re already seeing this pattern in tools that offer a “promote to Terraform” path - prototype fast against the cloud, then codify when something is ready to go to production. That’s a sane separation of concerns and I love it.

But for production - the part of the cloud that matters for compliance, disaster recovery, and the part of the bill that gets escalated to your CFO - the declarative, versioned, stateful model isn’t going anywhere. It’s just going to be authored by something faster than us non-robots.
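The sandbox-versus-production split can be written down as a routing rule. The sketch below is one hypothetical way to encode it. The `ChangeRequest` fields, the `LOW_BLAST_RADIUS` set, and the criteria themselves are assumptions a platform team would tune to its own risk tolerance.

```python
from dataclasses import dataclass
from enum import Enum


class Path(Enum):
    DIRECT_API = "agent calls cloud APIs directly"
    IAC_PIPELINE = "agent writes IaC, then PR, plan, and approval"


@dataclass(frozen=True)
class ChangeRequest:
    environment: str    # e.g. "sandbox", "dev", "production"
    ephemeral: bool     # will the resources be torn down shortly?
    touches_data: bool  # does the change affect stored or regulated data?


# Hypothetical tiering: environments where mistakes are cheap to recover from.
LOW_BLAST_RADIUS = {"sandbox", "dev"}


def route(change: ChangeRequest) -> Path:
    """Pick the execution path based on how expensive a mistake would be."""
    cheap_to_recover = (
        change.environment in LOW_BLAST_RADIUS
        and change.ephemeral
        and not change.touches_data
    )
    return Path.DIRECT_API if cheap_to_recover else Path.IAC_PIPELINE


prototype = ChangeRequest("sandbox", ephemeral=True, touches_data=False)
prod_change = ChangeRequest("production", ephemeral=False, touches_data=True)
risky_dev = ChangeRequest("dev", ephemeral=True, touches_data=True)

for request in (prototype, prod_change, risky_dev):
    print(request.environment, "->", route(request).value)
```

Anything that fails even one of the low-risk tests falls through to the governed pipeline by default, so the fast path has to be earned.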

If you’re a platform team trying to figure out where to invest right now, I’d think about it in two layers: harden your governance substrate (state, policy-as-code, provider schemas, audit pipelines) and let the authoring layer get displaced by agents. The substrate is the moat. The syntax is not.

So What’s the Takeaway

Here’s where I land: the value of Terraform was never its syntax. HCL has been the part of Terraform people complained about for as long as Terraform has existed. The value was the governance engine - state tracking, plan/apply, provider abstractions, declarative reconciliation. That engine is exactly the substrate autonomous agents need to operate safely in environments where mistakes are expensive.

If the cost of creating code is falling toward zero, as I keep hearing, then common sense dictates the value of verifying code MUST go up. It has to. Maybe asymptotically. The state file, the plan/apply cycle, the provider schemas, the policy gates - that’s the verification framework. That’s the part that doesn’t die. That’s the part that gets more important when the thing producing the code is moving faster than any human can read.

So no, I’m not packing up my .tfstate and going home. I’m watching the authoring layer collapse into the agent and the governance layer harden into something agents can be held accountable to. The interface changes, not the substrate.

AI through IaC, not instead of it. The state file remains the anchor for a system that can finally observe, reason, and act at the speed of intelligence - safely.