Let me paint you a picture. It’s 2025. You’ve discovered that you can describe a feature in plain English and an LLM will just… build it. The dopamine hit rivals or even eclipses social media. You feel as if you’re shipping things in an afternoon that used to take a week. You’re not reading diffs. You’re not understanding the internals. You’re just vibing - and it feels amazing.

Fast forward a few months. You try to add a feature to what you built. Or a teammate asks you to walk them through it. Or, worst of all, something breaks in production at 2 AM and you’re staring at 3,000 lines of AI-generated code you’ve never read. That’s the hangover.

The industry has a name for what we were doing: vibe coding. Andrej Karpathy coined the term in February 2025 - a post that racked up 4.5 million views - and it perfectly captured the spirit of the moment. Describe what you want. Accept the output. Don’t read the diffs. Just trust the vibes and everyone’s happy. Speed over quality. Speed over security.

Here’s the thing though: even Karpathy, in his MenuGen vibe coding post, acknowledged just how quickly the complexity compounds. The code was fine. The surrounding infrastructure, integrations, and config? A mess he admitted he didn’t fully understand. That should tell us something.

The Numbers Are Kind of Brutal

I tend to avoid AI fear-mongering. There’s enough of that going around. But ignoring data that directly affects the quality and security of what we ship isn’t optimism - it’s just not looking at the thing. If you fancy sticking your head in the sand and ignoring reality, then don’t read any further.

Let’s look at the thing.

| What was measured | Finding | Source |
|---|---|---|
| AI-generated code with security vulnerabilities | 45% | Veracode 2025 |
| Security failure rate of AI-generated Java code | 70%+ | Veracode 2025 |
| XSS vulnerability rate vs. human-written code | 2.74x higher | CodeRabbit Dec 2025 |
| XSS protection failure rate in AI code | 86% | Veracode 2025 |
| Experienced devs slower with AI tools (actual) | 19% slower | METR RCT Jul 2025 |
| How much faster devs expected to be (forecast) | 24% faster | METR RCT Jul 2025 |
| Code duplication increase (2020-2024) | ~4x | GitClear |
| Outstanding technical debt (current estimates) | $1.5 trillion | Analyst estimates |

That METR study is the one that should make you set down your coffee. It was a randomized controlled trial with experienced open-source developers - not juniors who don’t know better. They were 19% slower with AI tools, after forecasting that the tools would make them 24% faster; even afterward, they still estimated a speedup of about 20%. That is a 43-point gap between expectation and reality (19 points slower versus 24 points faster). We’re building fragile, vulnerable code and feeling great about it.

The problem was never AI-assisted development. The problem was unstructured AI-assisted development. We handed powerful tools to smart people and said “go vibe” without giving those tools any contracts to honor, any guardrails to stay inside, or any specifications to validate against. What did we think was going to happen? Common sense, anybody?

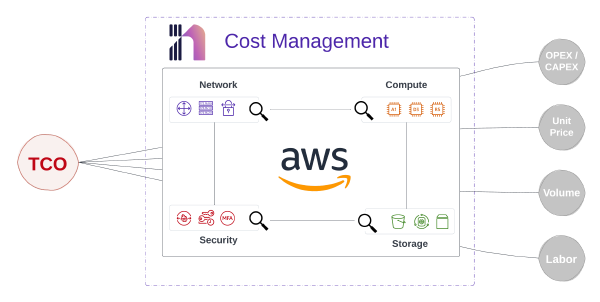

This Isn’t Actually New - Infrastructure Teams Already Know This

Here’s what’s interesting to me as someone who’s spent a lot of time in all manner of infrastructure automation: spec-driven development isn’t a new idea. It just finally has a name that sounds exciting. We love excitement, don’t we?

If you’ve ever written an OpenAPI spec before touching your API implementation, you’ve done spec-driven development. If you’ve defined a Protobuf schema before building a gRPC service, same thing. Network engineers have been doing this for decades. An RFC defines a protocol before anyone implements it. A YANG model describes a network device’s configuration schema before a single CLI command is written. An Ansible role’s interface is defined before the tasks are authored.

Specifications first, implementation second. That’s just how infrastructure has always worked. Application developers are only now catching up.

What’s changed is why it matters so much more right now. When AI is generating your implementation, the spec isn’t just documentation - it’s the only persistent, machine-readable truth that keeps the AI grounded. Without it, you’re just hoping the model remembers your intent from one prompt to the next. Spoiler: it doesn’t.

What Spec-Driven Development Actually Looks Like

The core idea is simple: write a formal, machine-readable specification first. That spec becomes the single source of truth that drives everything downstream - code generation, testing, documentation, validation, and AI agent guidance. The spec takes precedence over code. Code serves the spec.

Birgitta Böckeler at Thoughtworks lays out three maturity levels that I find useful:

- Spec-first: Write the spec, then use it during development as a reference

- Spec-anchored: Keep the spec alive after initial development for ongoing evolution

- Spec-as-source: The spec is the only thing a human edits; code is generated from it

That third level is where the most forward-thinking teams are headed. And honestly, it’s where infrastructure teams have been operating for years - we just called it “declarative intent” instead of spec-as-source.

What This Looks Like in Practice

The workflow has two flavors depending on whether you’re using traditional tooling or AI agents. Both start in the same place: the spec.

Traditional path: Define your spec (OpenAPI, AsyncAPI, JSON Schema, Protobuf), validate it with a linter like Spectral, generate server stubs and client SDKs with something like OpenAPI Generator (40+ languages supported), implement the business logic inside the generated scaffolding, and run contract tests to verify the implementation honors the spec. When requirements change, update the spec first, regenerate, iterate.
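The last step of that path, contract testing, is easier to picture with a few lines of code. This is a minimal sketch in plain Python, not the behavior of any particular contract-testing tool: the `TASK_SCHEMA` fragment and the `check_contract` helper are hypothetical names made up for illustration, and the checker only understands the `required` and primitive `type` keywords.

```python
# Hypothetical schema fragment for a "Task" resource (illustrative only).
TASK_SCHEMA = {
    "type": "object",
    "required": ["id", "title", "done"],
    "properties": {
        "id": {"type": "integer"},
        "title": {"type": "string"},
        "done": {"type": "boolean"},
    },
}

# Map JSON Schema primitive type names to Python types.
_PY_TYPES = {"integer": int, "string": str, "boolean": bool, "object": dict}


def check_contract(payload, schema):
    """Return a list of violations; an empty list means the payload conforms."""
    errors = []
    if not isinstance(payload, dict):
        return ["payload is not an object"]
    for field in schema.get("required", []):
        if field not in payload:
            errors.append(f"missing required field: {field}")
    for field, rule in schema.get("properties", {}).items():
        if field not in payload:
            continue
        expected = _PY_TYPES[rule["type"]]
        value = payload[field]
        # bool is a subclass of int in Python, so reject it for "integer".
        if isinstance(value, bool) and expected is int:
            errors.append(f"{field}: expected integer, got boolean")
        elif not isinstance(value, expected):
            errors.append(f"{field}: expected {rule['type']}")
    return errors


good = {"id": 1, "title": "Write the spec", "done": False}
bad = {"id": "1", "title": "Write the spec"}
print(check_contract(good, TASK_SCHEMA))  # conforms, so no violations
print(check_contract(bad, TASK_SCHEMA))   # wrong type for id, done is missing
```

In a real pipeline a full JSON Schema validator would take the place of this hand-rolled check. The point is only that the spec, not the implementation, decides what counts as a correct response.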

AI-assisted path: Write your structured spec. An AI agent reads it and generates an implementation plan, breaking the work into discrete, reviewable tasks. The agent executes each task while you review, test, and validate. When something changes, you update the spec - never the generated code directly - and the agent regenerates from the updated source of truth.
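The "discrete, reviewable tasks" step can be sketched as well. The snippet below is an assumption about how such a plan might be derived, not how any specific agent does it: the trimmed-down `SPEC` dictionary and the `plan_tasks` helper are hypothetical, and each operation in the spec simply becomes one task with its own acceptance criterion.

```python
# Hypothetical, trimmed-down OpenAPI-style document (illustrative only).
SPEC = {
    "paths": {
        "/tasks": {
            "get": {"operationId": "listTasks", "summary": "List all tasks"},
            "post": {"operationId": "createTask", "summary": "Create a task"},
        },
        "/tasks/{id}": {
            "get": {"operationId": "getTask", "summary": "Fetch one task"},
        },
    }
}

HTTP_METHODS = ("get", "put", "post", "delete", "patch")


def plan_tasks(spec):
    """Turn each operation in the spec into one small, reviewable task."""
    tasks = []
    for path, item in sorted(spec["paths"].items()):
        for method in HTTP_METHODS:
            if method not in item:
                continue
            op = item[method]
            tasks.append({
                "id": op["operationId"],
                "title": f"Implement {method.upper()} {path}",
                "acceptance": f"Contract test for {op['operationId']} passes",
            })
    return tasks


for task in plan_tasks(SPEC):
    print(task["id"], "-", task["title"])
```

Because the plan is derived from the spec, changing the spec and re-running the planner is what changes the work list. Nobody edits the task list or the generated code by hand.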

The second path is what makes this so powerful right now. The spec isn’t just a design artifact anymore. It’s an instruction set for an agent that can move much faster than most human developers.

Show, Don’t Just Tell: A Concrete Example

Let me make this tangible. Here’s a minimal OpenAPI spec for a task tracking API (yes, I generated this spec with Claude Code to save time):


From this single YAML file, you can generate a Go server with routing, a Python client SDK, interactive API docs, a mock server for frontend development, and contract tests - all before writing any business logic. Hand this spec to an AI coding agent alongside a structured instruction file, and you’ve given the model everything it needs to generate much higher quality, spec-compliant code on the first pass. No vibes required.
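To make the "one file, many outputs" idea concrete, here is a sketch of one such output: a mock response derived purely from a schema. The spec is written as a Python dictionary rather than YAML so the example runs with the standard library alone. Every path, field, and helper name in it (`SPEC`, `resolve_ref`, `mock_payload`) is an assumption for illustration and not the spec referred to above.

```python
# Hypothetical OpenAPI 3 document for a task tracker, written as a dict.
SPEC = {
    "openapi": "3.0.3",
    "info": {"title": "Task Tracker (example)", "version": "0.1.0"},
    "paths": {
        "/tasks/{taskId}": {
            "get": {
                "operationId": "getTask",
                "responses": {
                    "200": {
                        "description": "A single task",
                        "content": {
                            "application/json": {
                                "schema": {"$ref": "#/components/schemas/Task"}
                            }
                        },
                    }
                },
            }
        }
    },
    "components": {
        "schemas": {
            "Task": {
                "type": "object",
                "required": ["id", "title", "done"],
                "properties": {
                    "id": {"type": "integer"},
                    "title": {"type": "string"},
                    "done": {"type": "boolean"},
                },
            }
        }
    },
}

# Placeholder values a mock server could return for each primitive type.
PLACEHOLDERS = {"integer": 0, "string": "string", "boolean": False}


def resolve_ref(spec, ref):
    """Follow a local '#/a/b/c' reference inside the same document."""
    node = spec
    for part in ref.lstrip("#/").split("/"):
        node = node[part]
    return node


def mock_payload(spec, schema):
    """Build a placeholder payload that matches the given schema."""
    if "$ref" in schema:
        schema = resolve_ref(spec, schema["$ref"])
    if schema["type"] == "object":
        return {name: mock_payload(spec, sub)
                for name, sub in schema["properties"].items()}
    return PLACEHOLDERS[schema["type"]]


response = SPEC["paths"]["/tasks/{taskId}"]["get"]["responses"]["200"]
schema = response["content"]["application/json"]["schema"]
print(mock_payload(SPEC, schema))
```

Real generators do far more than this, but the principle is the same: the frontend team can start against the mock while the backend is still being written, because both sides derive from one document.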

The Connective Tissue: Instruction Files

Here’s where it gets interesting. A spec tells the AI what to build. But how do you tell it how to build it - which patterns to follow, which conventions to honor, which mistakes to avoid in your codebase specifically?

That’s where structured instruction files come in. Over the past year, a new category of engineering artifact has emerged: persistent, versionable, shareable instruction files that live alongside your code and give AI agents the context they need to operate effectively.

| Tool | Instruction File | Format |
|---|---|---|
| Claude Code | CLAUDE.md | Markdown |
| OpenAI Codex | AGENTS.md | Markdown |
| GitHub Copilot | .github/copilot-instructions.md | Markdown + YAML |
| Cursor | .cursor/rules/*.mdc | Markdown Component |
| AWS Kiro | Steering files | Markdown |
| Agent Skills Standard | SKILL.md | YAML frontmatter + Markdown |

Two of these have reached something resembling industry-standard status. AGENTS.md is now stewarded by the Agentic AI Foundation under the Linux Foundation (launched December 2025), with founding members including Anthropic, OpenAI, and Block. Think of it as a README for AI agents - project-wide instructions that any compliant agent can read and follow.

The Agent Skills Standard, originally developed by Anthropic and open-sourced in December 2025, takes a complementary approach. Skills are self-contained instruction packages that teach AI agents domain-specific workflows. They’re now adopted by OpenAI Codex, GitHub Copilot, Cursor, VS Code, Block’s Goose, and Spring AI.

The design insight I find compelling here is progressive disclosure. At startup, only skill names and descriptions are loaded (~50 tokens per skill). When a skill is triggered, the full instructions load (500-5,000 tokens). Scripts and reference files load only when needed. This is a real solution to the context window problem that makes monolithic system prompts unwieldy.
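Progressive disclosure is simple enough to sketch. The following is a toy model of the idea, not the loader of any real agent: the two skill files, the `split_skill` helper, and the `SkillRegistry` class are all hypothetical, the files live in memory so the example is self-contained, and the frontmatter parser handles only flat `key: value` lines.

```python
# Hypothetical SKILL.md contents, kept in memory so the sketch is self-contained.
SKILL_FILES = {
    "openapi-review": (
        "---\n"
        "name: openapi-review\n"
        "description: Review OpenAPI changes against team conventions\n"
        "---\n"
        "Step 1: lint the spec.\n"
        "Step 2: check naming rules.\n"
    ),
    "release-notes": (
        "---\n"
        "name: release-notes\n"
        "description: Draft release notes from merged changes\n"
        "---\n"
        "Collect merged changes, group by area, summarize.\n"
    ),
}


def split_skill(text):
    """Split a skill file into (metadata dict, instruction body)."""
    _, header, body = text.split("---\n", 2)
    meta = {}
    for line in header.splitlines():
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    return meta, body


class SkillRegistry:
    def __init__(self, files):
        self._files = files
        # Startup: keep only the short metadata for every skill.
        self.index = {name: split_skill(text)[0] for name, text in files.items()}
        self.loaded = {}

    def catalog(self):
        """The lightweight listing that is always in context."""
        return [f"{m['name']}: {m['description']}" for m in self.index.values()]

    def activate(self, name):
        """Load the full instructions only when the skill is triggered."""
        if name not in self.loaded:
            self.loaded[name] = split_skill(self._files[name])[1]
        return self.loaded[name]


registry = SkillRegistry(SKILL_FILES)
print(registry.catalog())
print(registry.activate("openapi-review"))
```

The catalog stays small no matter how long the individual skills get, which is the whole trick: context is spent only on the skills a task actually triggers.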

Specs and instruction files are complementary layers, not competing ones. The spec defines what to build. The instruction file defines how to build it. Together, they give an AI agent precise, persistent, validated context that ephemeral chat prompts can never match.

Vibe Coding vs. Spec-Driven: The Honest Comparison

These aren’t competing religious factions, though everything with AI seems to be. I want to be clear about that. They sit at different points on a spectrum, and the right choice actually depends on what you’re building.

| Dimension | Vibe Coding | Spec-Driven |
|---|---|---|
| Speed to first prototype | Minutes to hours | Hours to days |
| Code quality | Unpredictable | Constrained by spec, verifiable |
| Security posture | 45% vulnerability rate | Contract-tested, lintable |
| Maintainability | Black-box codebases | Spec serves as living docs |
| Team collaboration | Inherently individual | Enables parallel development |
| AI guidance quality | Ephemeral prompts | Persistent, structured context |
| Error handling | Copy-paste errors to AI | Automated contract testing |
| Enterprise readiness | Not production-grade | Built for governance |
| Skill requirement | Prompting ability | Architecture + spec writing |

| Skill requirement | Prompting ability | Architecture + spec writing |

The fundamental tension is output speed vs. outcome quality. Vibe coding gets you something fast. Spec-driven development gets you something right.

For a weekend hack, speed wins - I’m not going to pretend otherwise. But for production systems that manage infrastructure, process financial transactions, or handle anything with an audit trail and a compliance requirement? “Right” wins every time, even though getting there often takes longer, with or without AI. The fact that we needed a data-driven study to remind us of this is, frankly, a little embarrassing. Let’s make using our brains great again!

Vibe coding’s speed is front-loaded. You ship fast, then spend weeks debugging, patching security holes, and rewriting when requirements shift. Spec-driven development invests time upfront getting the contract right, then moves faster in every subsequent phase because everything derives from the same source of truth. It’s the tortoise and the hare - except the tortoise has an AI copilot and the hare is carrying $1.5 trillion in technical debt.

The Spec-Driven Pipeline in Full

When you combine specs and instruction files, the full governed AI development pipeline looks like this:

Human Intent → Specifications (OpenAPI, JSON Schema, AsyncAPI) → Instruction Files (CLAUDE.md, AGENTS.md, SKILL.md) → AI Agent → Validated, Spec-Compliant Code

Each layer narrows the AI’s degrees of freedom. Specs constrain what the code must do. Instruction files constrain how the code should be written. Validation checks that the output actually conforms to both. The result is far less ambiguity, a much lower hallucination risk on structural decisions, and no “I just trusted the vibes” moments in your production codebase.
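A rough sketch of the first and last stages of that pipeline, under stated assumptions: the operation sets, `build_context`, and `validate_routes` are hypothetical names, and "validation" here is reduced to comparing the routes an agent produced with the operations the spec declares.

```python
# Hypothetical inputs: operations the spec declares, and what an agent produced.
SPEC_OPERATIONS = {
    ("GET", "/tasks"),
    ("POST", "/tasks"),
    ("GET", "/tasks/{id}"),
}

GENERATED_ROUTES = {
    ("GET", "/tasks"),
    ("POST", "/tasks"),
    ("DELETE", "/tasks/{id}"),  # not in the spec
}


def build_context(spec_text, instructions_text):
    """Combine the 'what' (spec) and the 'how' (instructions) for the agent."""
    return (
        "## Specification\n" + spec_text.strip() + "\n\n"
        "## Instructions\n" + instructions_text.strip() + "\n"
    )


def validate_routes(spec_ops, generated):
    """Report operations the agent missed and operations it invented."""
    return {
        "missing": sorted(spec_ops - generated),
        "unexpected": sorted(generated - spec_ops),
    }


report = validate_routes(SPEC_OPERATIONS, GENERATED_ROUTES)
accepted = not report["missing"] and not report["unexpected"]
print(report, accepted)
```

Here the gate rejects the output twice over: one declared operation is missing and one undeclared operation was invented. Neither problem depends on a human happening to notice it in a diff.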

One source I encountered while pulling this post together put it well: feeding structured specifications to LLMs eliminates ambiguity and sends a precise description to the model, which dramatically reduces the risk of hallucinations and bugs. That’s the entire argument in one sentence.

Where This Is All Headed

The industry is converging fast. Karpathy moved from “vibe coding” to “agentic engineering.” GitHub built Spec Kit. AWS built Kiro. Anthropic open-sourced the Agent Skills Standard, and OpenAI adopted it in Codex. The Linux Foundation is hosting the governance infrastructure. Thoughtworks added spec-driven development to their Technology Radar.

These aren’t isolated signals. They represent a collective realization that AI-assisted development is incredibly powerful, but only when the AI is working from structured, validated, persistent specifications rather than ephemeral chat prompts. The future isn’t choosing between AI and engineering discipline. It’s using both, where the human architects the intent and the AI executes it faithfully.

And look - if you work in any form of infrastructure, none of this should feel foreign. We’ve been writing specs before implementation for years. YANG models, OpenConfig schemas, Ansible role interfaces, Terraform variable definitions. The mental model is the same. What’s new is that the consumer of those specs is increasingly an AI agent moving at a pace that humans can’t match.

The only thing that changes when AI enters the loop is that the cost of an underspecified contract goes up dramatically. Sloppy specs were always a maintenance problem. Now they’re also a correctness, security, and reliability problem.

So go ahead and vibe code your weekend projects. But when you’re building something that has to work every time, across real infrastructure, with teammates who need to understand it in six months - write the spec first. Your future self will thank you.