Here’s a question I got asked recently: If a skill can already call a REST API using Bash, why bother with MCP?

The surface-level answer is “MCP is cleaner.” That’s not wrong, but it undersells what’s actually different - and I think it’s a genuinely useful distinction to understand if you’re serious about building reliable agent workflows. Also, common sense could use a resurgence, given the flood of “old thing is DEAD now that new thing is out” clickbait proliferating on LinkedIn.

The Setup: Two Ways to Connect an Agent to the World

When an AI agent needs to interact with an external system, you broadly have two approaches:

Skills with Bash calls: The agent executes shell commands - curl, API calls, whatever the skill instructs. The skill contains both the workflow logic and the API integration details. It works. A lot of useful things get built this way.

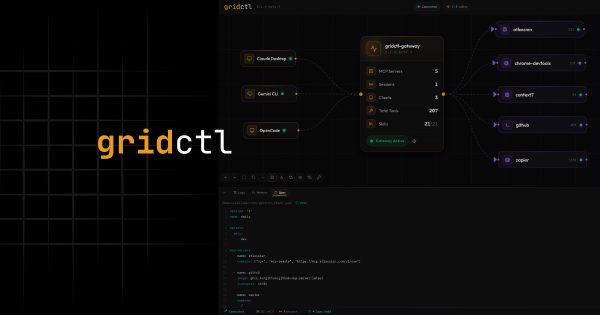

Model Context Protocol (MCP): The agent calls named, typed tools exposed by an MCP server. The server handles the actual API interaction. The agent never sees credentials, never executes arbitrary shell commands, and can only call the tools the server chooses to expose.
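For a sense of what “named, typed tools” means on the wire: an MCP server advertises each tool with a `name`, a `description`, and a JSON Schema under `inputSchema` (returned from `tools/list`), and the client invokes a tool with a JSON-RPC 2.0 `tools/call` request carrying the tool name and its arguments. The Python below is a minimal sketch that only builds those two messages as plain dicts; the `get_invoice` tool, its `invoice_id` argument, and the `make_tool_call` helper are hypothetical, and the MCP specification remains the authority on the full message format.

```python
import json

# Hypothetical tool definition, in the shape an MCP server lists from `tools/list`.
GET_INVOICE_TOOL = {
    "name": "get_invoice",
    "description": "Fetch a single invoice by its ID.",
    "inputSchema": {
        "type": "object",
        "properties": {"invoice_id": {"type": "string"}},
        "required": ["invoice_id"],
    },
}


def make_tool_call(request_id: int, name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 `tools/call` request as an MCP client would send it."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


request = make_tool_call(1, "get_invoice", {"invoice_id": "INV-1001"})
print(json.dumps(request, indent=2))
```

Notice what the request does not carry: no URL, no HTTP verb, no credentials. Everything about how `get_invoice` reaches the upstream API stays on the server side.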

Both approaches get data in and out. But they’re not equivalent - and the gap matters more as your use cases get serious.

The Trust Boundary Is the Real Answer

When a skill makes a REST call through Bash, the agent has shell access. Full shell access. It’s not making a bounded API call - it’s executing arbitrary commands that happen to be curl. You can’t audit exactly what ran. You can’t restrict what the agent is allowed to call. You can’t enforce rate limits or approval gates at the execution layer.

You’re trusting the skill instructions to constrain the agent’s behavior.

MCP flips that. The agent can only call the tools the MCP server exposes. Each tool is a bounded, named capability with a defined input schema. The MCP server becomes the enforcement point - it can implement rate limiting, logging, approval workflows, or business logic the agent never has to think about.

You go from “trust the agent not to do something bad with Bash” to “the agent literally cannot call anything outside this defined set of tools.”

That’s a meaningful security boundary, not just an aesthetic preference.

Infrastructure folks especially should recognize this pattern: it’s the same reason we use IAM policies instead of just asking engineers not to run certain commands. Intent is not enforcement.
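Here is a minimal, stdlib-only sketch of what “the server becomes the enforcement point” can look like. `ToolServer`, `ToolError`, and the two example tools are hypothetical and not part of any MCP SDK; a real server would plug equivalent checks into its own tool handlers. The validator covers only a small subset of JSON Schema (required keys, no unexpected keys, primitive types), the approval hook is a stand-in for a human-in-the-loop gate, and the rate limiter is a simple sliding window shared across all tools.

```python
from __future__ import annotations

import time
from collections import deque
from typing import Any, Callable

# The subset of JSON Schema primitive types this sketch understands.
_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


class ToolError(Exception):
    """Raised when the server refuses a tool call."""


class ToolServer:
    """Hypothetical enforcement point: the agent can only reach registered tools."""

    def __init__(self, max_calls: int, per_seconds: float,
                 approve: Callable[[str, dict], bool] = lambda name, args: True):
        self._tools: dict[str, tuple[dict, Callable[..., Any], bool]] = {}
        self._recent: deque[float] = deque()  # timestamps of admitted calls
        self._max_calls = max_calls
        self._per_seconds = per_seconds
        self._approve = approve  # e.g. a human-in-the-loop check
        self.audit_log: list[tuple[str, dict, str]] = []

    def register(self, name: str, schema: dict, handler: Callable[..., Any],
                 needs_approval: bool = False) -> None:
        self._tools[name] = (schema, handler, needs_approval)

    def call(self, name: str, arguments: dict) -> Any:
        """Single entry point for the agent; every attempt is logged."""
        try:
            result = self._dispatch(name, arguments)
        except Exception as exc:
            self.audit_log.append((name, dict(arguments), f"failed: {exc}"))
            raise
        self.audit_log.append((name, dict(arguments), "ok"))
        return result

    def _dispatch(self, name: str, arguments: dict) -> Any:
        if name not in self._tools:  # allowlist: nothing else is callable
            raise ToolError(f"unknown tool {name!r}")
        schema, handler, needs_approval = self._tools[name]
        self._validate(schema, arguments)
        if needs_approval and not self._approve(name, arguments):
            raise ToolError(f"{name!r} was not approved")
        self._rate_limit()
        return handler(**arguments)

    @staticmethod
    def _validate(schema: dict, arguments: dict) -> None:
        props = schema.get("properties", {})
        missing = [key for key in schema.get("required", []) if key not in arguments]
        if missing:
            raise ToolError(f"missing arguments {missing}")
        for key, value in arguments.items():
            if key not in props:
                raise ToolError(f"unexpected argument {key!r}")
            expected = _TYPES[props[key]["type"]]
            # bool is a subclass of int in Python, so reject it explicitly.
            if not isinstance(value, expected) or (
                    isinstance(value, bool) and expected is not bool):
                raise ToolError(f"{key!r} must be of type {props[key]['type']}")

    def _rate_limit(self) -> None:
        now = time.monotonic()
        # Drop timestamps that have slid out of the window.
        while self._recent and now - self._recent[0] >= self._per_seconds:
            self._recent.popleft()
        if len(self._recent) >= self._max_calls:
            raise ToolError("rate limit exceeded")
        self._recent.append(now)


server = ToolServer(max_calls=10, per_seconds=60,
                    approve=lambda name, args: args["amount"] < 100)
server.register("get_invoice",
                {"properties": {"invoice_id": {"type": "string"}}, "required": ["invoice_id"]},
                lambda invoice_id: {"id": invoice_id, "status": "paid"})
server.register("issue_refund",
                {"properties": {"invoice_id": {"type": "string"},
                                "amount": {"type": "integer"}},
                 "required": ["invoice_id", "amount"]},
                lambda invoice_id, amount: {"refunded": amount},
                needs_approval=True)
print(server.call("get_invoice", {"invoice_id": "INV-1001"}))
```

The important property is the first check in `_dispatch`: a request for anything that was never registered, say a `run_shell` tool, fails before any handler runs, regardless of what the agent was instructed to do.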

Auth Never Enters the Agent’s Context

This one is more subtle but important.

With skills and curl, auth tokens appear in the skill instructions, in the Bash commands, in the agent’s working memory. The agent handles credential management, token refresh, 401 recovery. This means credentials are floating around in context - passed through prompts, visible to logging, subject to whatever the model does with things in its context window.

With MCP, the server owns auth entirely. The agent calls a tool. It never sees credentials. The server handles token lifecycle, refresh, and recovery completely outside the agent’s awareness.

That’s not just cleaner code - it’s a genuine separation of trust concerns. Credentials belong in infrastructure, not in prompts.
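A sketch of what “the server owns auth” can mean in practice, again in plain Python with hypothetical names: `TokenManager`, `make_get_invoice`, and an injected `transport` that stands in for a real HTTP client so the example runs offline. The bearer token is fetched, cached, and refreshed on a 401 entirely inside the server; the tool handler the agent reaches takes only an `invoice_id` and returns only the response body.

```python
from __future__ import annotations

from typing import Callable

# Hypothetical transport: takes (path, headers), returns (status_code, json_body).
# A real server would wrap an HTTP client here; injecting it keeps the sketch offline.
Transport = Callable[[str, dict], tuple[int, dict]]


class TokenManager:
    """Caches a bearer token on the server; the agent never sees this object."""

    def __init__(self, fetch_new_token: Callable[[], str]):
        self._fetch_new_token = fetch_new_token  # e.g. reads from a secret store
        self._token: str | None = None

    def current(self) -> str:
        if self._token is None:
            self._token = self._fetch_new_token()
        return self._token

    def refresh(self) -> str:
        self._token = self._fetch_new_token()
        return self._token


def make_get_invoice(transport: Transport, tokens: TokenManager) -> Callable[[str], dict]:
    """Build the handler behind a hypothetical `get_invoice` tool."""

    def get_invoice(invoice_id: str) -> dict:
        path = f"/invoices/{invoice_id}"
        status, body = transport(path, {"Authorization": f"Bearer {tokens.current()}"})
        if status == 401:
            # Expired or revoked token: refresh once and retry, inside the server.
            status, body = transport(path, {"Authorization": f"Bearer {tokens.refresh()}"})
        if status != 200:
            raise RuntimeError(f"upstream returned HTTP {status}")
        return body  # only data goes back to the agent, never headers or tokens

    return get_invoice
```

Where `fetch_new_token` gets its secret (an environment variable, a secrets manager, an OAuth client-credentials flow) is a server deployment decision. None of it has to be spelled out in a prompt.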

The Interface Outlasts the Skill

Here’s a maintenance argument that I find compelling.

If an API changes - endpoints move, auth schemes change, response shapes shift - every skill that hardcodes that endpoint breaks. And skills tend to hardcode a lot, because the skill is encoding both what to do and how to connect in the same artifact. Change one, and you’re touching the other.

With MCP, you fix the server once and every agent that uses it gets the update. The skills don’t need to change because they were never encoding the API surface in the first place. They were encoding workflow logic. The server was encoding connectivity. Those two things can now evolve independently.

This sounds simple until you’re managing a dozen skills across three teams that all talk to the same API. Then it’s the difference between a one-line server fix and a refactoring sprint.
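To illustrate the “fix the server once” argument, suppose (hypothetically) the upstream billing API moved invoices from a `/v1/` path to a `/v2/billing/` path and renamed its response fields. In a sketch like the one below, both changes are absorbed in one server-side module, while the tool contract that skills depend on (`id`, `total_cents`, `status`) stays fixed. The endpoint paths, field names, and the `API_VERSION` switch are all invented for illustration.

```python
# Hypothetical server-side connectivity module. Skills never import or see this;
# they only see the stable output of the `get_invoice` tool.
ENDPOINTS = {
    "v1": "/v1/invoices/{invoice_id}",
    "v2": "/v2/billing/invoices/{invoice_id}",
}
API_VERSION = "v2"  # the one-line change when the upstream moves


def invoice_path(invoice_id: str, version: str = API_VERSION) -> str:
    """Resolve the upstream URL path for the configured API version."""
    return ENDPOINTS[version].format(invoice_id=invoice_id)


def normalize_invoice(raw: dict, version: str = API_VERSION) -> dict:
    """Map whatever the upstream returns onto the stable tool contract."""
    if version == "v1":
        return {"id": raw["id"], "total_cents": raw["amount"], "status": raw["state"]}
    if version == "v2":
        return {
            "id": raw["invoiceId"],
            "total_cents": raw["total"]["cents"],
            "status": raw["status"].lower(),
        }
    raise ValueError(f"unsupported API version {version!r}")
```

Every skill keeps calling `get_invoice` and keeps receiving the same three fields, whichever upstream version is live.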

The Thing That’s Easy to Miss

Skills doing Bash calls work great as long as the agent has a shell. Some agent environments don’t. Sandboxed runners, browser-based IDEs, restricted cloud execution environments - they may not grant the shell access the skill assumes. MCP works anywhere the agent runtime supports it, which is increasingly everywhere.

But the more important thing isn’t the environment - it’s that the skill is doing double duty.

Every skill that makes a REST call is encoding two different kinds of knowledge in one place:

- Workflow knowledge: What to do, in what order, under what conditions

- Connectivity knowledge: How to auth, which endpoint, how to parse the response

These are different concerns that want to evolve at different rates. Workflow logic changes when business requirements change. API connectivity changes when the upstream service changes. Bundling them means every change touches code that probably didn’t need to change.
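The split between the two kinds of knowledge can be sketched as a workflow function that only ever talks to external systems through a `call_tool` callable. Real skills are usually natural-language instructions rather than Python, so treat this as a stand-in for what the skill describes; the tools (`get_invoice`, `send_reminder`, `open_ticket`), the `days_overdue` field, and the 30/90-day thresholds are all hypothetical.

```python
from typing import Any, Callable

# Connectivity is hidden behind this callable: (tool_name, arguments) -> result.
CallTool = Callable[[str, dict], Any]


def follow_up_on_invoice(invoice_id: str, call_tool: CallTool) -> str:
    """Workflow knowledge only: what to do, in what order, under what conditions.

    No URLs, tokens, or response parsing appear here; those live behind call_tool.
    """
    invoice = call_tool("get_invoice", {"invoice_id": invoice_id})
    if invoice["status"] == "paid":
        return "nothing to do"
    days = invoice["days_overdue"]
    if days > 90:
        call_tool("open_ticket", {"invoice_id": invoice_id, "reason": "90+ days overdue"})
        return "escalated"
    if days > 30:
        call_tool("send_reminder", {"invoice_id": invoice_id})
        return "reminded"
    return "waiting"
```

Because `call_tool` is injected, the same workflow could run against an MCP client in production and a fake in tests, and an upstream API change never requires editing it.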

MCP gives those concerns their own homes.

A Way to Think About It

The cleaner mental model for me is this: skills teach the agent to think, MCP teaches the agent to act.

Skills encode reasoning - when to do something, how to sequence decisions, what conditions to check, how to respond to results. MCP exposes capabilities - bounded, auditable, schema-validated actions the agent can take against external systems.

Right now, a lot of skills are doing both. That works. It’s how you get things running quickly, and there’s nothing wrong with shipping something that works. But it doesn’t scale cleanly once you’re managing multiple agents, multiple teams, or multiple environments - because the boundary between “what the agent knows how to think about” and “what the agent is allowed to do” should be explicit, not implicit in whatever commands a skill happens to execute.

Think of it like the difference between application code and infrastructure. You can hardcode a database connection string in your application. It works. But once you’re past a certain level of complexity, you stop doing that - not because it stops working, but because the separation of concerns pays for itself.

Where This Matters Most

If you’re building personal workflows, one-person tools, or prototypes - skills with Bash calls are probably fine. The overhead of an MCP server isn’t worth it for every use case. Right tool, right job.

But if you’re thinking about:

- Agent workflows that need to be auditable

- Credentials that shouldn’t float through prompt context

- Multiple agents or teams sharing the same external integrations

- Environments where shell access isn’t guaranteed

- APIs that change and shouldn’t require coordinated skill updates

…then MCP earns its keep quickly.

The pattern that’s emerging in practice is roughly: skills own the reasoning and workflow orchestration, MCP servers own the external connectivity and enforcement. Used together, they form complementary layers.

Skills and MCP aren’t really in competition. They’re answering different questions. Skills answer “what should the agent do?” MCP answers “what is the agent allowed to do, and how?”

Getting clear on that distinction is what takes agent workflows from “it works on my machine” to something you’d actually trust in production.