Open up the claude_desktop_config.json or mcp.json of the average AI tinkerer right now and tell me you don’t flinch. API keys sitting in plaintext. GitHub PATs with repo scope pasted next to a GitLab token that somebody will forget about in six months. A Slack bot token that absolutely should not be in a file backed up to iCloud. We collectively spent a decade teaching engineers not to do this - and then MCP showed up and everybody speed-ran the mistake all over again.

Local development is the path of least resistance. Everyone wants to ship faster too, which means shortcuts get taken. The quickstart says put your API key here, so you put your API key there, and the thing works, and you move on. But “it works on my laptop” has always been a shaky foundation, and when that laptop now runs an agent with tools, browser control, and a willingness to follow instructions from a webpage, the blast radius of a leaked credential is bigger than it was last year.

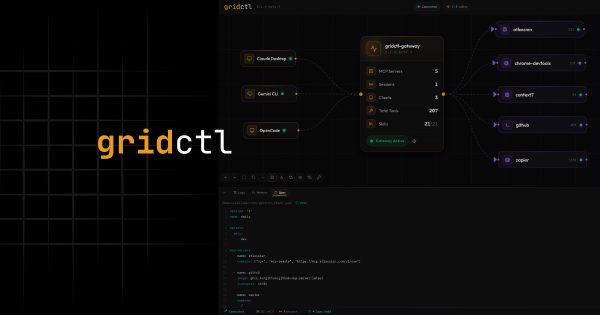

If you maintain anything in the FLOSS space, you already know this: sometimes you don’t know what you don’t know. That’s why I ran gridctl through the OpenSSF Best Practices process - less for the badge, more for the audit. The gaps it surfaced, and how I closed them, are what the rest of this post is about.

The OpenSSF Best Practices Program

Let’s get this part out of the way first because it’s the easiest to hand-wave past.

OpenSSF Best Practices (formerly the Linux Foundation CII badge) covers everything from how your project handles security disclosures, to how dependencies are tracked, to whether your build pipeline actually runs static analysis. The passing tier alone is ~60 criteria. The silver and gold tiers are harder.

To me, the real value wasn’t the little green badge in the README. It was the process of being forced to write down your answers and finding out where your gaps are. Sometimes those gaps exist because we’re moving too fast. Sometimes we just haven’t done this before.

You don’t get to say “we care about security” in the abstract. You have to point to the SECURITY.md. You have to link to your vulnerability scanning. You have to explain how a researcher would report an issue, and what the SLA looks like. It’s an audit of your governance, not just your code. For a project I run mostly solo, that kind of external forcing function is genuinely useful - it keeps me honest in the moments when shipping feels more fun than scanning.

If you maintain anything even moderately serious, I’d recommend going through it. The worst case is you discover a few gaps you didn’t know you had. The best case is you build a culture around fixing them.

The Real Problem: Secrets Sprawl in Local Dev

Of the ~60 criteria, the one that mattered most for gridctl was how secrets are handled - not because the project itself has any, but because gridctl’s whole job is orchestrating MCP servers that do. Which forced me to be honest about the technical reality most of us are living in.

The typical MCP onboarding flow looks something like this:

| |

That token is now in:

- The config file itself (plaintext on disk)

- Your terminal scrollback if you ever `cat`’d it to double-check, and your shell history if you ever pasted the token into a command

- Any backup tool syncing your home directory

- Potentially your git history if you were in a hurry and didn’t `.gitignore` aggressively enough

- The process env of any server the MCP client launches, which means it’s visible to anything that can read `/proc/<pid>/environ` on Linux

Multiply that by the half-dozen MCP servers most people are running, across two or three different coding assistants, and you get a pretty bleak picture. The single worst part is that there’s no clean rotation story - if one of those tokens leaks, you have to grep every config file you’ve ever touched to find it.
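If you want to know how bad your own machine is, the grep can be automated. Here is a minimal Go sketch, assuming the common `mcpServers` JSON layout with a per-server `env` block. The name heuristic, the function name `findInlineSecrets`, and the sample config are all illustrative assumptions, not part of gridctl.

```go
package main

import (
	"encoding/json"
	"fmt"
	"regexp"
	"sort"
)

// mcpConfig mirrors the common "mcpServers" layout used by desktop MCP
// clients. The shape is an assumption for illustration; real clients may differ.
type mcpConfig struct {
	Servers map[string]struct {
		Env map[string]string `json:"env"`
	} `json:"mcpServers"`
}

// sensitiveName is a rough, hypothetical heuristic for env var names that
// usually carry credentials.
var sensitiveName = regexp.MustCompile(`(?i)(token|secret|password|api_?key)`)

// findInlineSecrets returns "server.VAR" for every env entry whose name looks
// sensitive and whose value is a non-empty literal rather than a ${...}
// reference. It reports names only and never returns the values themselves.
func findInlineSecrets(raw []byte) ([]string, error) {
	var cfg mcpConfig
	if err := json.Unmarshal(raw, &cfg); err != nil {
		return nil, fmt.Errorf("parse config: %w", err)
	}
	var hits []string
	for server, def := range cfg.Servers {
		for name, value := range def.Env {
			isReference := len(value) > 1 && value[0] == '$' && value[1] == '{'
			if sensitiveName.MatchString(name) && value != "" && !isReference {
				hits = append(hits, server+"."+name)
			}
		}
	}
	sort.Strings(hits) // map iteration order is random; sort for stable output
	return hits, nil
}

func main() {
	// Sample config with one inline token and one variable reference.
	raw := []byte(`{"mcpServers": {
		"github": {"env": {"GITHUB_PERSONAL_ACCESS_TOKEN": "ghp_example"}},
		"other":  {"env": {"API_KEY": "${input:api_key}", "LOG_LEVEL": "debug"}}
	}}`)
	hits, err := findInlineSecrets(raw)
	if err != nil {
		panic(err)
	}
	fmt.Println(hits)
}
```

A name-based heuristic will miss credentials hiding under innocent names, so treat a clean result as a starting point rather than proof.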

This is the default experience, and it’s not a strawman. Plenty of popular walkthroughs still show the inline pattern as the happy path: copy-paste configs where you swap <YOUR_TOKEN> for the real thing. A placeholder in a tutorial isn’t a bad thing in itself. The problem is that too many people paste the real token in without thinking about where that file ends up.

Credit where it’s due - GitHub’s own github-mcp-server has since moved to OAuth and ${input:github_token} variable substitution, which is the right direction. But that’s the exception. The pattern most engineers are copy-pasting from is still token goes in the file, and every blog post reinforcing it is one more place the habit gets normalized.

To be fair, the MCP spec itself is not the villain here - it just defines a protocol for servers to advertise tools. The implementation conventions that popped up around it, especially for local dev, are where the hygiene went sideways. Security was an afterthought, which is how these things usually go.

How I Approach This With Gridctl

Gridctl orchestrates MCP servers, bridges LLM clients, and injects skills via a registry. If I didn’t solve the secrets problem, I’d just be shipping an easier, more convenient version of a bad habit.

A Real Vault, Not a Dotfile

The first rule is simple: secrets never sit in plaintext on disk. Gridctl ships with an encrypted vault backed by modern crypto primitives, not some hand-rolled XOR business.

From pkg/vault/crypto.go:

| |

Two things worth calling out:

- Argon2id is the KDF the password-hashing community has been pointing at for years. It’s the OWASP-recommended default for password hashing. It’s memory-hard, which makes GPU brute force far more expensive than it is against older KDFs like PBKDF2, and its hybrid design adds resistance to side-channel attacks. 64 MiB of memory per derivation is the expensive knob - it makes brute force painful without being unusable on a laptop.

- XChaCha20-Poly1305 for the actual encryption - an AEAD cipher with a 192-bit nonce, which is big enough that random nonces are safe without bookkeeping. The `golang.org/x/crypto/chacha20poly1305` package is maintained by the Go security team. I didn’t want to get clever here.

The vault uses envelope encryption: a Data Encryption Key (DEK) encrypts the secrets, and a Key Encryption Key (KEK) derived from your passphrase encrypts the DEK. That separation means rotating your passphrase doesn’t require re-encrypting every secret - only the DEK gets rewrapped. It also means the DEK can live in memory only for as long as it takes to decrypt, which shrinks the window where key material is exposed.
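To make the DEK/KEK split concrete, here is a minimal sketch of envelope encryption and passphrase rotation. It is not the code from pkg/vault. To stay standard-library only, it uses AES-256-GCM as a stand-in for XChaCha20-Poly1305, and it assumes the KEK has already been derived from the passphrase (by Argon2id or similar). The names `seal`, `open`, and `rewrap` are hypothetical.

```go
package main

import (
	"crypto/aes"
	"crypto/cipher"
	"crypto/rand"
	"fmt"
)

// newAEAD builds AES-256-GCM from a 32-byte key. This is a stand-in cipher;
// the vault described above uses XChaCha20-Poly1305.
func newAEAD(key []byte) (cipher.AEAD, error) {
	block, err := aes.NewCipher(key)
	if err != nil {
		return nil, err
	}
	return cipher.NewGCM(block)
}

// seal encrypts plaintext under key and prepends the random nonce.
// GCM's 96-bit nonce is smaller than XChaCha20's 192-bit one, so random
// nonces are only safe here for a modest number of messages per key.
func seal(key, plaintext []byte) ([]byte, error) {
	aead, err := newAEAD(key)
	if err != nil {
		return nil, err
	}
	nonce := make([]byte, aead.NonceSize())
	if _, err := rand.Read(nonce); err != nil {
		return nil, err
	}
	return aead.Seal(nonce, nonce, plaintext, nil), nil
}

// open reverses seal: split off the nonce, then authenticate and decrypt.
func open(key, sealed []byte) ([]byte, error) {
	aead, err := newAEAD(key)
	if err != nil {
		return nil, err
	}
	if len(sealed) < aead.NonceSize() {
		return nil, fmt.Errorf("ciphertext too short")
	}
	nonce, ct := sealed[:aead.NonceSize()], sealed[aead.NonceSize():]
	return aead.Open(nil, nonce, ct, nil)
}

// rewrap re-encrypts the DEK under a new KEK. No secret is touched.
func rewrap(oldKEK, newKEK, wrappedDEK []byte) ([]byte, error) {
	dek, err := open(oldKEK, wrappedDEK)
	if err != nil {
		return nil, err
	}
	return seal(newKEK, dek)
}

// randomKey returns 32 random bytes; it stands in for both a generated DEK
// and a passphrase-derived KEK in this sketch.
func randomKey() []byte {
	k := make([]byte, 32)
	if _, err := rand.Read(k); err != nil {
		panic(err)
	}
	return k
}

// must keeps the demo in main short by panicking on any error.
func must[T any](v T, err error) T {
	if err != nil {
		panic(err)
	}
	return v
}

func main() {
	oldKEK, newKEK, dek := randomKey(), randomKey(), randomKey()
	secret := must(seal(dek, []byte("ghp_example"))) // secret encrypted once
	wrapped := must(seal(oldKEK, dek))               // DEK wrapped by the KEK

	// Passphrase rotation: only the wrapped DEK changes.
	wrapped = must(rewrap(oldKEK, newKEK, wrapped))

	plain := must(open(must(open(newKEK, wrapped)), secret))
	fmt.Println(string(plain))
}
```

The encrypted secret is never rewritten during rotation, which is the whole appeal of the pattern: the cost of changing a passphrase stays constant no matter how many secrets the vault holds.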

References, Not Raw Values

The second rule is: your config file should never contain a credential. Ever.

Gridctl stacks are defined in YAML. Instead of pasting a token anywhere, you attach a secrets set to the stack and let gridctl inject the values at load time:

| |

That’s the whole thing. No ${vault:KEY} expressions, no env block listing credentials the server needs, nothing. The GitHub MCP server ends up with GITHUB_PERSONAL_ACCESS_TOKEN in its environment because the dev set contains it - and the dev set lives in the encrypted vault, not the config.

The injection itself is small and readable. From pkg/config/loader.go:

| |

Two things I like about this. First, gridctl doesn’t write the decrypted value to disk: injection happens in memory, into the server’s env (what the container runtime does with that env afterward is its own concern). Second, the if _, exists check means an explicit env entry always beats a set value, so there’s no footgun where a wrong vault value silently shadows something you typed on purpose. You can commit your stack.yaml to a public repo and the worst anybody learns is the name of the set you’re using.
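The precedence rule is easy to show in isolation. This is a minimal sketch of the idea, not the contents of pkg/config/loader.go; the function name `injectSet` and the plain map types are assumptions for illustration.

```go
package main

import "fmt"

// injectSet copies secrets from an already-decrypted set into a server's env
// map, skipping any key the stack file set explicitly. Everything stays in
// memory; nothing is written back to disk.
func injectSet(env, set map[string]string) map[string]string {
	if env == nil {
		env = make(map[string]string, len(set))
	}
	for key, value := range set {
		if _, exists := env[key]; exists {
			continue // an explicit env entry wins over the set value
		}
		env[key] = value
	}
	return env
}

func main() {
	// LOG_LEVEL is set explicitly in the stack; the set also carries one.
	env := map[string]string{"LOG_LEVEL": "debug"}
	set := map[string]string{
		"GITHUB_PERSONAL_ACCESS_TOKEN": "ghp_example",
		"LOG_LEVEL":                    "info",
	}
	env = injectSet(env, set)
	fmt.Println(env["LOG_LEVEL"], len(env)) // prints "debug 2"
}
```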

This is the same reference-not-embed pattern Terraform and other serious infrastructure tools settled on - because it’s the one that actually works. The nice thing about putting it in front of MCP is that it makes the right thing the easy thing. You don’t have to choose to be careful; the config file can’t hold a raw credential because there’s nowhere to put one.

To be clear, this is not how secrets should be managed at production scale. It’s a middle ground between leaving things in plaintext during local development and running an enterprise-grade secrets manager.

No Secrets in Logs. Ever.

Here’s the one that catches most people.

You can do everything else right - encrypted vault, reference-only config, the whole deal - and then your logger helpfully dumps the decrypted value into stderr because somebody wrote log.Info("env", "GITHUB_TOKEN", val) during a debugging session. Congratulations, the secret is now in journalctl, in your shell scrollback, and quite possibly in whatever aggregator is slurping up logs for analysis.

Gridctl wraps the standard library log/slog handler with a redacting layer that enforces three things at once. From pkg/logging/redact.go:

| |

That’s the pattern-matching layer - it catches the obvious shapes (Authorization headers, Bearer tokens, anything that looks like api_key=...). The second layer registers every exact value currently in the vault as a string to redact, sorted longest-first so a short secret that happens to be a substring of a longer one doesn’t break replacement. The third layer specifically scrubs errors from third-party libraries (looking at you, go-git) that love to stuff tokens into error messages:

| |

Is this belt-and-suspenders? Yeah. That’s the point. Log redaction is exactly the kind of thing where one missed pattern is one credential in a logfile somebody ships off to a support ticket. I’d rather over-engineer this than find out the hard way.

If you’re building anything that handles secrets, assume your logger will betray you. Add redaction at the handler level, not at every callsite - callsite discipline is the first thing that breaks when someone’s debugging at 2am. Also, debugging at 2am should be illegal.
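For anyone who wants a starting point, here is a minimal sketch of the three layers in one place: pattern matching, exact-value replacement sorted longest-first, and error scrubbing. It is not gridctl’s pkg/logging code. It hooks into log/slog through `HandlerOptions.ReplaceAttr` instead of a wrapping handler because that is the shortest way to get handler-level enforcement, and the `Redactor` type, the regexes, and the mask string are all assumptions.

```go
package main

import (
	"errors"
	"log/slog"
	"os"
	"regexp"
	"sort"
	"strings"
)

const mask = "[REDACTED]"

// patterns cover a few common credential shapes. Illustrative, not exhaustive.
var patterns = []*regexp.Regexp{
	regexp.MustCompile(`(?i)(bearer\s+)[A-Za-z0-9._~+/=-]+`),
	regexp.MustCompile(`(?i)((?:api_?key|token|password)=)[^\s&]+`),
}

// Redactor holds the exact secret values to scrub (hypothetical type).
type Redactor struct{ values []string }

// NewRedactor registers exact values longest-first, so a short secret that is
// a substring of a longer one cannot leave part of the longer one behind.
func NewRedactor(secrets []string) *Redactor {
	vals := make([]string, 0, len(secrets))
	for _, s := range secrets {
		if s != "" { // an empty value would match everywhere
			vals = append(vals, s)
		}
	}
	sort.Slice(vals, func(i, j int) bool { return len(vals[i]) > len(vals[j]) })
	return &Redactor{values: vals}
}

// Redact applies the exact-value layer first, then the pattern layer.
func (r *Redactor) Redact(s string) string {
	for _, v := range r.values {
		s = strings.ReplaceAll(s, v, mask)
	}
	for _, p := range patterns {
		s = p.ReplaceAllString(s, "${1}"+mask) // keep the prefix, mask the rest
	}
	return s
}

// ScrubError returns a new error with a redacted message. It deliberately
// drops the wrapped chain, since unwrapping would expose the original text.
func (r *Redactor) ScrubError(err error) error {
	if err == nil {
		return nil
	}
	return errors.New(r.Redact(err.Error()))
}

// ReplaceAttr redacts string and error attributes, including the message,
// before the handler writes them.
func (r *Redactor) ReplaceAttr(_ []string, a slog.Attr) slog.Attr {
	switch a.Value.Kind() {
	case slog.KindString:
		a.Value = slog.StringValue(r.Redact(a.Value.String()))
	case slog.KindAny:
		if err, ok := a.Value.Any().(error); ok {
			a.Value = slog.StringValue(r.Redact(err.Error()))
		}
	}
	return a
}

func main() {
	r := NewRedactor([]string{"ghp_example", "ghp_example_long"})
	logger := slog.New(slog.NewTextHandler(os.Stderr,
		&slog.HandlerOptions{ReplaceAttr: r.ReplaceAttr}))

	cloneErr := errors.New("auth failed for https://x:ghp_example_long@example.com/repo.git")
	logger.Info("clone failed", "err", cloneErr, "header", "Authorization: Bearer abc.def")
}
```

Because the hook sits on the handler, a careless `logger.Info` at a callsite still goes through redaction. Values buried inside structs or other non-string attributes are not covered by this sketch, so it is a floor, not a guarantee.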

Scanning, Linting, and Other Unglamorous Work

OpenSSF asks a lot about automated analysis - static scanning, vulnerability checks, CI gates. This is where gridctl’s pipeline earns those checkmarks and, more importantly, where the habit stays enforced after you stop paying attention. Every PR into gridctl runs through:

- govulncheck for symbol-reachable vulnerability scanning in Go dependencies. Crucially, it checks whether vulnerable code paths are actually called - not just whether a vulnerable version is in `go.sum`. Suppressions live in a tracked script with a documented rationale, because “we decided this doesn’t apply” should never be invisible.

- gosec via golangci-lint for Go-specific security patterns (unsafe crypto, command injection, file permissions).

- `go test -race` on every change, because concurrency bugs and security bugs often look alike.

- Coverage gates that fail the build if test coverage drops below threshold - not because coverage equals quality, but because the ratchet has to go one direction.
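A coverage gate is the easiest of these to hand-roll. Here is a minimal sketch that parses the `total:` line printed by `go tool cover -func=coverage.out` and fails below a threshold. The function names, the sample report, and the 80% threshold are assumptions; this is not gridctl’s actual CI script.

```go
package main

import (
	"fmt"
	"os"
	"strconv"
	"strings"
)

// totalCoverage extracts the percentage from the "total:" line of a
// `go tool cover -func` report.
func totalCoverage(report string) (float64, error) {
	for _, line := range strings.Split(report, "\n") {
		fields := strings.Fields(line)
		if len(fields) >= 2 && fields[0] == "total:" {
			pct := strings.TrimSuffix(fields[len(fields)-1], "%")
			return strconv.ParseFloat(pct, 64)
		}
	}
	return 0, fmt.Errorf("no total line in coverage report")
}

// checkGate returns an error when total coverage is below the threshold.
func checkGate(report string, threshold float64) error {
	got, err := totalCoverage(report)
	if err != nil {
		return err
	}
	if got < threshold {
		return fmt.Errorf("coverage %.1f%% is below threshold %.1f%%", got, threshold)
	}
	return nil
}

func main() {
	// In CI this would come from the real report; a sample keeps the sketch
	// self-contained. The file path and numbers are made up.
	report := "example.com/pkg/vault/crypto.go:12:\tSeal\t100.0%\n" +
		"total:\t(statements)\t81.4%\n"

	if err := checkGate(report, 80.0); err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1) // a non-zero exit is what fails the build
	}
	fmt.Println("coverage gate passed")
}
```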

None of this is revolutionary. All of it is tedious. That’s kind of the point - security is mostly the willingness to do tedious things consistently, not the discovery of clever tricks.

The Actual Takeaway

AI has changed things, and it’ll keep changing them. That isn’t a good excuse to regress on security fundamentals. If anything, it’s the opposite - agents that can read files, hit APIs, and execute tools are the exact moment you want your credentials locked down, not scattered across a dozen .json files in your home directory.

The pattern is straightforward and it’s been the answer for years:

- Encrypt secrets at rest with real crypto you didn’t write yourself.

- Reference them from config, never embed them.

- Redact aggressively in logs, with enforcement at the handler, not the callsite.

- Run the scanners even when they’re annoying.

- Write it down so the next person (or the next you) can audit what you did.

The OpenSSF badge was useful to me less as a marketing artifact and more as a forcing function to write all of this down. If you’re building in this space, I’d genuinely encourage you to go through the process. You’ll find gaps. You’ll close most of them. You’ll have something to point at when someone - rightfully - asks how serious you actually are about the security of the thing you’re asking them to run.

The old rules still apply. MCP didn’t repeal them, and no amount of velocity will.